Abuse of Science: Extreme Event Attribution Studies

As its header suggests, this blog’s purpose is to combat today’s abuse and rejection of true science – the hallmarks of which are empirical evidence and logic. These two pillars are being outrageously abused in climate science by the ever-increasing use of extreme event attribution studies, which attempt to describe the extent to which specific extreme weather events are influenced by anthropogenic climate change.

Both the mainstream media and government reports have latched on to event attribution to fan the flames of climate alarmism and the associated narrative that we are in a climate crisis. But such studies are deeply flawed, with errors in both science and interpretation, having been created for legal and political rather than scientific reasons.

One of the fundamental failings of attribution studies is the logical fallacy of “begging the question.” To estimate the effect of global warming on a particular extreme event, the studies need to replicate the climate without the impact of global warming.

But this ignores the role of natural variability, which is neglected in the climate models used in attribution studies on the assumption that anthropogenic CO2 alone, with no contribution from natural sources, accounts for all current warming. If natural sources are assumed to play no role in warming today, then their contribution to a preindustrial climate is unknown, and the “world without global warming” that the models construct already presupposes the very conclusion the study sets out to test.

Other scientific shortcomings include a lack of appropriate peer review, faulty statistics and the disregard of important evidence. Defective methodological practices include the improper use of temperature datasets, insufficient attention to uncertainties in the data, and the neglect of historical records.

In a new GWPF (Global Warming Policy Foundation) report, I’ve discussed several recent attribution studies in detail. One example is a heatwave concentrated in the U.S. southwest, Mexico and Central America in May and June 2024, which generated alarmist media headlines proclaiming the event “35 times more likely” than in the preindustrial past. The media reports were fed by an attribution study conducted by the Grantham Institute at Imperial College London.

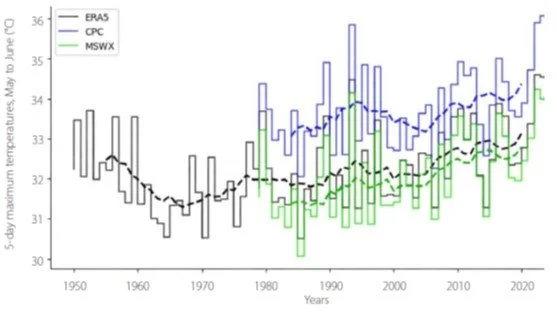

Apart from the general limitations of attribution studies, the absurdity of such a claim becomes clear when one examines the three temperature datasets the study employed. The figure below shows the May to June maximum of 5-day maximum daytime temperatures in the study region for all three datasets, from 1950 (1979 for two of the datasets) to 2024; the dashed lines are 10-year moving averages.
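For readers unfamiliar with how such a plotted quantity is built, here is a minimal sketch. It assumes, as an illustration only, that a “5-day maximum daytime temperature” is the mean of daily maximum temperatures over five consecutive days, that each year’s value is the largest such mean in May and June, and that the moving average is a simple trailing 10-year window. The function names are hypothetical; this is not the study’s code.

```python
from statistics import mean


def max_5day_mean(daily_tmax: list[float]) -> float:
    """Largest 5-day running mean of daily maximum temperatures (assumed definition)."""
    if len(daily_tmax) < 5:
        raise ValueError("need at least 5 days of data")
    # Slide a 5-day window over the season and keep the hottest window's mean.
    return max(mean(daily_tmax[i:i + 5]) for i in range(len(daily_tmax) - 4))


def moving_average(values: list[float], window: int = 10) -> list[float]:
    """Trailing moving average; the first window-1 years get no value."""
    return [mean(values[i - window + 1:i + 1]) for i in range(window - 1, len(values))]


# Hypothetical daily maxima for one short stretch of a season (deg C)
print(max_5day_mean([30, 31, 35, 36, 37, 38, 36, 33]))  # 36.4
```

A centered window would shift the dashed line by half a window; the trailing version is used here only because it is the simplest to state.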

To begin with, the study’s estimate that the region’s 2024 heatwave was 35 times more likely than in the preindustrial past is an average across all three temperature datasets. For the ERA5 data alone (the European Centre for Medium-Range Weather Forecasts’ fifth-generation reanalysis product), which extend back to 1950 rather than only to 1979 as in the other two datasets, the estimate is a much lower 13 times more likely.
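How the three dataset estimates were combined is not stated here, but simple arithmetic shows what an average of 35 implies when one dataset gives 13. The sketch below assumes, purely for illustration, either a plain arithmetic mean of three probability ratios or a geometric mean (averaging in log space, a common choice for ratios); neither rule is claimed to be the study’s actual method, and the function names are hypothetical.

```python
import math


def others_arithmetic(overall: float, known: float, n: int = 3) -> float:
    """Mean of the remaining n-1 ratios if `overall` is an arithmetic mean (assumption)."""
    return (overall * n - known) / (n - 1)


def others_geometric(overall: float, known: float, n: int = 3) -> float:
    """Geometric mean of the remaining n-1 ratios if `overall` is a geometric mean (assumption)."""
    return math.exp((n * math.log(overall) - math.log(known)) / (n - 1))


print(others_arithmetic(35, 13))  # 46.0
print(others_geometric(35, 13))   # roughly 57
```

Under either assumption, the two shorter datasets would have to point to ratios well above the headline figure to pull the ERA5 value of 13 up to an average of 35.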

Another flaw in this attribution study is that it plays down the uncertainty involved. The height of the box around each data point in the figure indicates the temperature uncertainty, and once these uncertainties are taken into account, it can be seen that a heatwave possibly comparable to that of 2024 occurred in the early 1990s.
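The point about the boxes can be made concrete with a simple overlap test: if two yearly values have uncertainty ranges that share some common ground, the data alone cannot say which year was hotter. This is a minimal sketch, assuming each value is a central estimate with a symmetric half-width equal to half the box height; the numbers below are hypothetical placeholders, not readings taken from the figure.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    value: float       # central estimate (deg C)
    half_width: float  # half the height of the uncertainty box

    @property
    def low(self) -> float:
        return self.value - self.half_width

    @property
    def high(self) -> float:
        return self.value + self.half_width


def overlaps(a: Estimate, b: Estimate) -> bool:
    """True if the two uncertainty ranges share at least one value."""
    return a.low <= b.high and b.low <= a.high


# Hypothetical values for illustration only
recent = Estimate(value=40.0, half_width=0.5)
earlier = Estimate(value=39.3, half_width=0.4)
print(overlaps(recent, earlier))  # True: 39.5 is below 39.7
```

Overlapping ranges are a rough check rather than a formal significance test, but they are enough to show that a lower central value does not by itself establish a cooler year.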

A second example is a devastating flash flood that swept through holiday camps and homes in central Texas on July 4, 2025. An attribution study conducted by ClimaMeter, a French counterpart of the UK’s Grantham Institute, concluded that “Natural variability alone cannot explain the changes in precipitation associated with this very exceptional meteorological condition.”

ClimaMeter attributed the catastrophic flooding to two factors: a purported temperature increase of up to 1.5 degrees Celsius (2.7 degrees Fahrenheit) in the flood-affected area between the earlier period 1950–86 and the more recent period 1987–2023; and an increase in present-day rainfall of up to 2 mm (0.08 inches) per day, or up to 7%, over the past in parts of central Texas.
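The unit conversions quoted above can be checked directly. One subtlety: a temperature *difference* converts from Celsius to Fahrenheit with the factor 9/5 alone, without the 32-degree offset used for absolute temperatures. The helper names are illustrative.

```python
MM_PER_INCH = 25.4  # exact by definition


def celsius_delta_to_fahrenheit(delta_c: float) -> float:
    """Convert a temperature change; no +32 offset applies to differences."""
    return delta_c * 9 / 5


def mm_to_inches(mm: float) -> float:
    """Convert millimetres to inches."""
    return mm / MM_PER_INCH


print(celsius_delta_to_fahrenheit(1.5))  # 2.7
print(round(mm_to_inches(2), 2))         # 0.08
```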

But these assertions don’t stand up to scrutiny. First, the reference period of 1950–86 includes 25 of the approximately 35 years of global cooling from 1940 to 1975, so the estimated temperature increase for central Texas between the two periods is most likely inflated: a cool baseline exaggerates any later rise.
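The effect of a cool reference period can be illustrated with a synthetic series. This sketch is entirely hypothetical: it assumes a temperature anomaly that is flat except for a uniform 0.3-degree dip from 1940 through 1975, with no warming at all afterwards, and compares the same two periods ClimaMeter used. The function names and the size of the dip are illustrative assumptions, not data for central Texas.

```python
from statistics import mean


def synthetic_anomaly(year: int) -> float:
    """Hypothetical anomaly (deg C): zero except a 0.3-degree dip in 1940-1975."""
    return -0.3 if 1940 <= year <= 1975 else 0.0


def period_mean(start: int, end: int) -> float:
    """Mean anomaly over the inclusive year range start..end."""
    return mean(synthetic_anomaly(y) for y in range(start, end + 1))


past = period_mean(1950, 1986)    # 26 of its 37 calendar years fall in the dip
recent = period_mean(1987, 2023)  # no dip years at all
print(f"apparent warming: {recent - past:.2f} deg C")  # apparent warming: 0.21 deg C
```

Even though this series has no upward trend, the later period comes out warmer simply because the earlier period contains most of the cool years.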

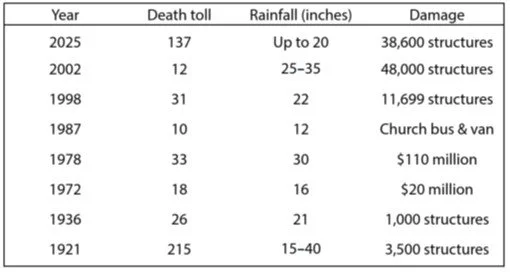

Second, heavier rainfall does not necessarily raise the probability of extreme flooding, which also depends on factors such as rainfall duration, terrain and the type of river basin. In fact, disastrous floods in Texas’ Flash Flood Alley are nothing new and have caused devastation for more than a century, as shown in the following table.

As my report discusses, extreme event attribution was developed because of the inability of the IPCC (Intergovernmental Panel on Climate Change) to achieve high or even medium confidence in the detection and attribution of most types of extreme weather events. That failure has been politically problematic for climate activists, who have therefore pushed for rapid extreme event attribution in order to pursue climate litigation against fossil fuel companies.

The origins of this climate lawfare date back to a 2012 meeting of U.S. environmental advocates, climate scientists and others, which attempted to mimic strategies from the 1960s campaign against tobacco smoking.

Further details of several of these studies, and of this history, can be found in the report itself.
